Has Ed Tech Reached a Tipping Point?

Technology has become a constant presence in modern classrooms. Devices are everywhere. Students read on screens, watch videos, complete assignments online, and often move from one digital platform to another throughout the day. For years, the assumption behind this shift has been simple: more technology means better learning. Two recent pieces of writing challenge that assumption in important ways, and as an academic at heart, I LOVE reading books and articles like these. It is SO important to challenge norms in schools to ensure that the students in our classrooms are getting the best possible education. The first, which I recently read cover to cover, is Dr. Jared Cooney Horvath’s book The Digital Delusion. The second is a recent New York Times article about growing concern among parents over the amount of screen time students experience during the school day. Together they raise a fundamental question for educators: if technology is so widespread in schools, is it actually helping students learn? Have we reached the ed tech tipping point, where the drawbacks outweigh the benefits?

Dr. Jared Cooney Horvath is a neuroscientist who studies how people learn. In The Digital Delusion, he argues that the widespread use of digital devices in classrooms often works against the very learning outcomes schools are trying to achieve. Drawing on research in neuroscience and cognitive science, he suggests that many of the assumptions driving classroom technology adoption are simply incorrect. Horvath’s central argument is not that technology is inherently bad. Instead, he believes schools have embraced it too quickly and without sufficient evidence. According to his research, learning is deeply connected to physical experience, movement, and sustained attention. Digital tools, by contrast, are often designed to keep users engaged through constant stimulation, fast transitions, and short bursts of content. These design features may be excellent for entertainment, but they can conflict with how the brain builds deep understanding. Real learning tends to require slow thinking, concentration, and repetition. When students are constantly switching between apps, videos, and notifications, their attention becomes fragmented.

Horvath also argues that digital environments sometimes weaken memory formation. He points to research showing that physical materials such as books provide spatial cues that help the brain organize information. Screens often remove those cues, making it harder for students to build the mental structures needed for long-term learning. Another concern raised in the book is the shift toward passive learning. Many classroom technologies position students primarily as consumers of information rather than active participants in creating knowledge. Watching a video or clicking through slides may deliver information quickly, but it does not necessarily produce the kind of cognitive effort required for deep understanding. For Horvath, the solution is not to abandon technology entirely. Instead, he calls for a return to approaches that place human interaction, physical engagement, and purposeful practice at the center of learning.

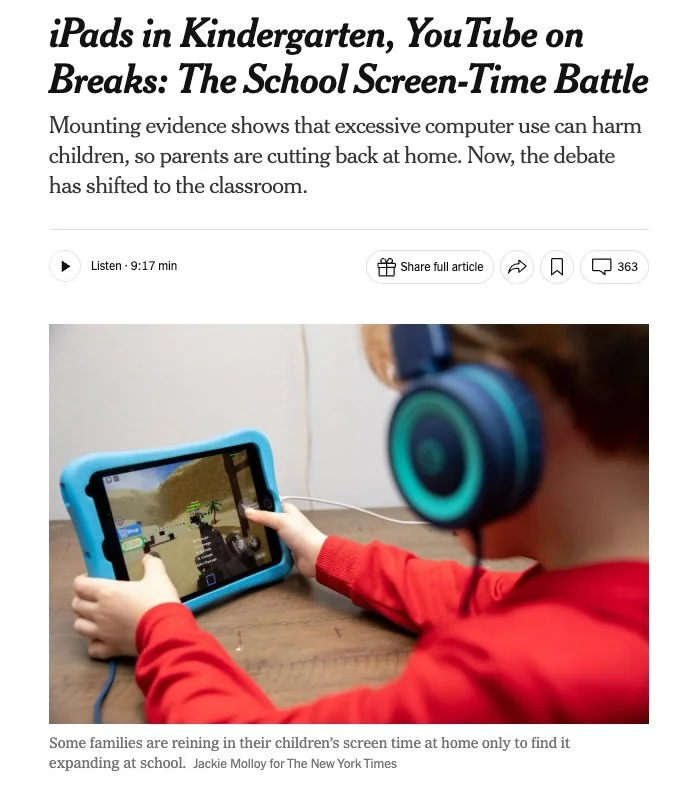

These ideas are echoed in a recent New York Times article about screen use in schools. Across the United States, many parents are beginning to question how much time students spend on devices during the school day. The article describes classrooms where tablets or laptops are used for a wide range of activities, sometimes including watching videos during snack time or transitions between lessons. Parents who expected limited device use were surprised to find that screens appeared frequently throughout the day. Some families say the amount of screen time feels excessive, especially for younger students. In one example, parents discovered that their kindergarten child was exposed to advertisements and videos during school activities. For families that had carefully limited technology use at home, the shift was unsettling.

These concerns reflect a broader cultural shift. After a period when digital learning tools were widely embraced, many educators and parents are beginning to ask whether the balance has tipped too far toward screens. The pandemic accelerated device use in classrooms, and in many schools those practices have continued even after in-person learning resumed. Other schools made a conscious shift away from incorporating any type of technology into the classroom, a kind of digital detox. Importantly, most critics are not arguing that technology should disappear from education. Instead, they are asking a different question: what kind of technology use actually supports learning rather than distracting from it?

Outside of schools, it seems to me that kids (and their parents) have devices in their hands all the time. As a society, we are addicted to our phones. Though this might be an unpopular opinion, maybe parents play just as big a role as schools in children's overuse of screens. Rather than letting kids stay on their devices 24/7 at home, parents should encourage more analogue activities: sports, hiking, dance class, playing outside, and yes, maybe even some private music lessons! Limiting screen time outside of school is just as important to this argument as limiting it in the classroom.

The distinction between active and passive learning is central to this conversation. Passive technology use often places students in the role of viewer or listener. They watch instructional videos, scroll through digital textbooks, or complete automated quizzes. These activities can deliver information efficiently, but they do not always require the student to think deeply or create something new. Active learning is different. Students perform tasks, practice skills, make decisions, and receive feedback. The focus shifts from consuming information to doing something meaningful with it.

For music education, this difference is particularly important. Music is not learned primarily by watching or reading. It is learned by actively making music. Students must sing, play instruments, compose, listen carefully, and reflect on what they hear. When technology becomes a substitute for those experiences, learning suffers. When technology supports those experiences, it can become a powerful tool. This is where the design philosophy behind MusicFirst becomes relevant. The goal of MusicFirst is not to place students in front of screens simply to watch or read. Instead, the platform is built around tools that encourage students to actively create and perform music.

Programs such as Soundtrap, YuStudio, and OGenPlus allow students to record, edit, and produce their own music. Rather than passively listening to a finished recording, students build musical ideas track by track. They experiment with rhythm, melody, and harmony while learning the fundamentals of music production. Notation tools like Flat and Noteflight ask students to compose and arrange music themselves. Writing a melody or building a chord progression requires students to apply theoretical knowledge in a concrete way. The process turns abstract concepts into practical decisions. Assessment tools such as PracticeFirst (powered by MatchMySound) and Sight Reading Factory focus on performance. Students must play or sing musical examples and submit recordings for feedback. This kind of activity mirrors the real practice process musicians use every day. Even music theory platforms like Auralia and Musition emphasize interaction. Students identify intervals, build chords, analyze harmony, and respond to musical examples. Each activity requires active listening and decision making rather than passive viewing.

MusicFirst Elementary follows the same philosophy but places the classroom teacher firmly at the center of the learning experience. Personally, I believe that using educational technology in an elementary music classroom requires more careful thought than at any other level about how and why it engages students, so that they are not simply consuming content passively. The curriculum is designed to support active music making in the classroom, encouraging students to sing, move, dance, create, and perform together. Digital resources help teachers guide instruction, but they do not replace the teacher or turn students into passive viewers of videos or players of isolated games. Lessons are structured so that technology supports what the teacher is doing in the room rather than distracting from it. The focus remains on musical participation and joyful music making with classmates.

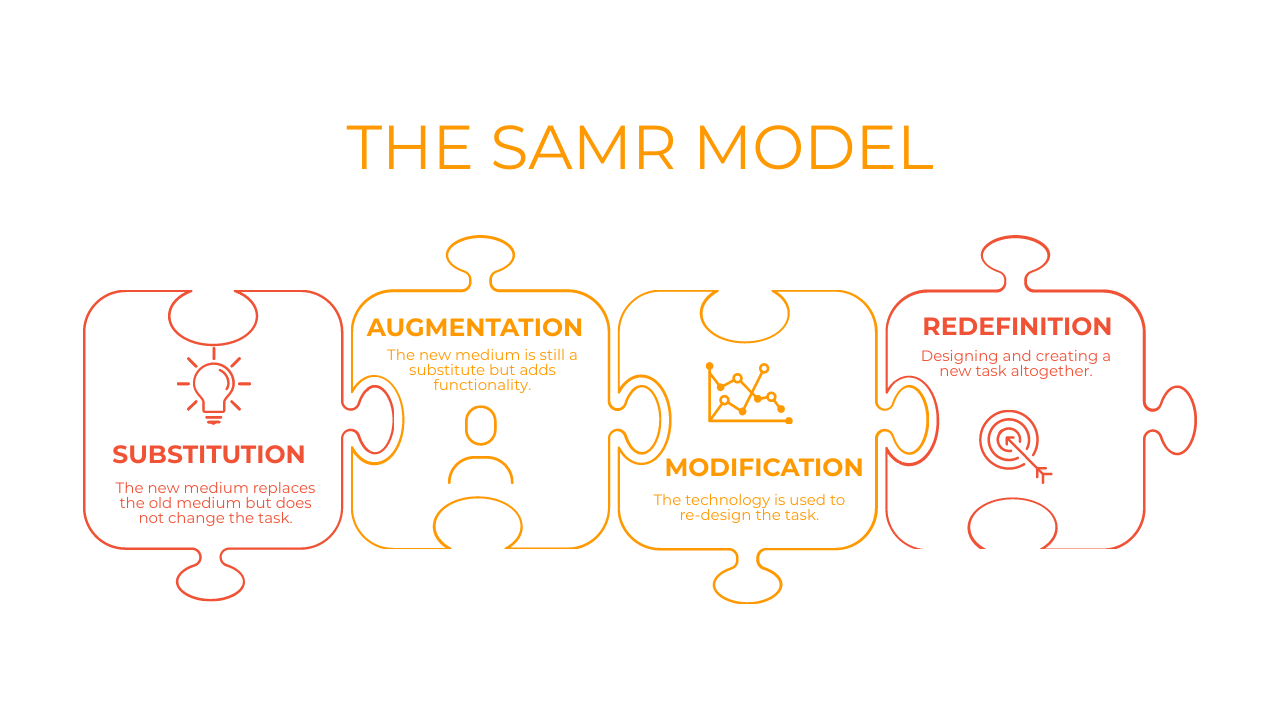

In other words, the technology becomes a tool for doing music rather than simply consuming it.

My advice to any teacher who incorporates technology into their teaching is to always keep in mind the SAMR Model, a conceptual framework created by Ruben R. Puentedura that is similar to Bloom’s Taxonomy in many ways. SAMR is an acronym for Substitution, Augmentation, Modification, and Redefinition. Substitution is when technology simply replaces an analogue tool without changing the task itself. Augmentation is still a substitution, but one that adds significant functional improvements. Modification is when technology actually redesigns or reconceptualizes the task, and Redefinition is when the task is completely novel, possible only because of the technology. When we designed MusicFirst and MusicFirst Elementary, our designers and programmers always tried to reach Redefinition and, when that was not possible, at least aimed for Augmentation. In my opinion, MANY ed tech tools embody Substitution, and I think that type of integration is what Horvath and the New York Times are criticizing, and rightly so.

The concerns raised in The Digital Delusion and the New York Times article highlight an important truth. Technology alone does not improve learning. In some cases it may even distract from it. What matters is how technology is used. If a device becomes a delivery system for endless content, students may spend hours staring at screens without developing meaningful skills. But when technology supports active practice, creativity, and feedback, it can strengthen learning rather than weaken it.

Music education provides a clear example of this distinction. Students cannot learn to perform by watching videos alone. They must practice, experiment, and listen carefully to the results. The best educational technology does not replace those experiences. It helps make them possible. As educators continue to evaluate the role of digital tools in classrooms, the goal should remain simple. Technology should help students think, create, and engage more deeply with the subject they are studying. When that happens, screens become instruments of learning rather than distractions from it.